Customer Decisioning Blueprint [Part 7/7]

Customer Decisioning Blueprint: How to convert Data, AI and Context into Real-Time Customer Action

As we enter the new year, I would like to wish all readers a Happy New Year. I look forward to spending more time on MarTech Square in the year ahead, developing these ideas further and engaging more deeply with the community.

Re-establishing the premise

Throughout this series, the Customer Decisioning Blueprint has been presented as a structural response to a persistent organisational challenge: the inability of enterprises to make consistent, coherent, and customer-appropriate decisions at scale, despite significant investments in data, analytics, and marketing technology.

One aspect of the blueprint may appear counterintuitive given current industry discourse. Artificial intelligence is not positioned as the central organising concept. While AI features throughout the blueprint, particularly within decisioning intelligence, optimisation, and learning, it is treated as an enabling capability rather than the defining principle.

This is a deliberate design choice.

The blueprint is concerned primarily with decision architecture: how decisions are framed, governed, executed, and learned from across time and channels. AI materially affects how decisions can be made, but it does not, on its own, resolve questions of intent, accountability, governance, or trust. These questions remain structural rather than technological.

This final essay examines how the blueprint evolves as AI capabilities mature, particularly with the emergence of agentic systems and autonomous agents. It argues that while AI will significantly reshape execution, the blueprint’s underlying logic remains necessary - and, in some respects, becomes more critical.

The Customer Decisioning Blueprint to date

The series has progressively built the blueprint as a complete operating system rather than a collection of isolated capabilities:

Part 1 examined the historical roots of the decisioning gap and why organisations struggle to translate data into consistent action.

Part 2 introduced the foundational principles and the Level 0 Customer Decisioning Blueprint.

Part 3 defined the Enterprise Decisioning Base, comprising data foundations, decisioning intelligence, and business context.

Part 4 explored the Decisioning Orchestration Spine, the operational layer that connects decisions to channels, journeys, and moments.

Part 5 covered the Decisioning Governance & Adaptation Loop, the layer that sustains, governs, and institutionalises customer decisioning over time.

Part 6 was the convergence piece, bringing all of the above elements together into a single, coherent system.

Why AI is not the centre of gravity

Across all six parts, a consistent position has been maintained: AI is a capability within the system, not its centre of gravity.

Today’s marketing and customer engagement literature frequently positions AI as the core transformation lever. In practice, enterprise reality is more nuanced. Most organisations already employ AI in some form (propensity models, recommendations, next-best-action logic, or optimisation algorithms), yet few would claim to have achieved mature customer decisioning.

The limiting factor is rarely model sophistication. Instead, it is the absence of:

clear decision ownership

consistent decision logic across channels

alignment between strategy and execution

enforceable governance and trust mechanisms

AI amplifies both strengths and weaknesses within an organisation’s decisioning system. Without an explicit decisioning framework, increased autonomy often leads to fragmentation rather than coherence. For this reason, the Customer Decisioning Blueprint treats AI as a component within a broader system.

The future evolution of AI does not invalidate this approach; it reinforces it.

Industry research consistently highlights the growing gap between the speed of AI capability development and organisational readiness to adopt it effectively. According to Gartner, the mass proliferation of AI providers launching agentic models, agentic-integrated platforms and other agent-infused products far exceeds the present demand.

Customer Decisioning will evolve with AI

Customer decisioning will inevitably evolve as artificial intelligence becomes more capable and more autonomous. However, this evolution should not be understood as a replacement of existing frameworks, but as a reconfiguration of how work is distributed between humans and machines. As has occurred in prior technological shifts, the core structures of work often persist, while the location of effort, judgement, and control changes. Decisioning frameworks therefore remain necessary, but they must be interpreted dynamically rather than statically.

The book Reinventing Jobs argues that new technologies do not simply automate existing jobs but require organisations to rethink work at the level of tasks, workflows, and accountability. The authors describe a fundamental “reshuffling” of work, in which activities are redistributed across humans, machines, and systems based on comparative advantage rather than legacy role design.

The introduction of the moving assembly line at Ford Motor Company for the Model T did not eliminate manufacturing work; it restructured it around flow, specialisation, and control.

Customer decisioning now faces a comparable inflection point. AI does not invalidate decisioning frameworks; it changes who or what performs specific decisioning tasks within them. The question is not whether the blueprint remains relevant, but how its components are enacted as intelligence becomes increasingly agentic.

What changes and what remains the same

Historically, AI in customer engagement has been largely reactive. Models were trained and invoked within predefined processes, with humans responsible for designing journeys, configuring rules, initiating decisions, and interpreting outcomes.

Agentic AI represents a structural shift. Agents can initiate actions, coordinate with other agents, negotiate trade-offs, and adapt behaviour continuously. As a result, the central question is no longer whether AI supports decisioning, but how increasing autonomy is structured, constrained, and governed within a coherent framework.

Importantly, autonomy extends rather than replaces decisioning logic. Where traditional systems generated scores or recommendations, future systems will increasingly coordinate across domains, resolve competing objectives, and optimise decisions in real time. This pattern is already evident in broader enterprise adoption of AI-driven workflow automation.

The near future: agent-assisted decisioning

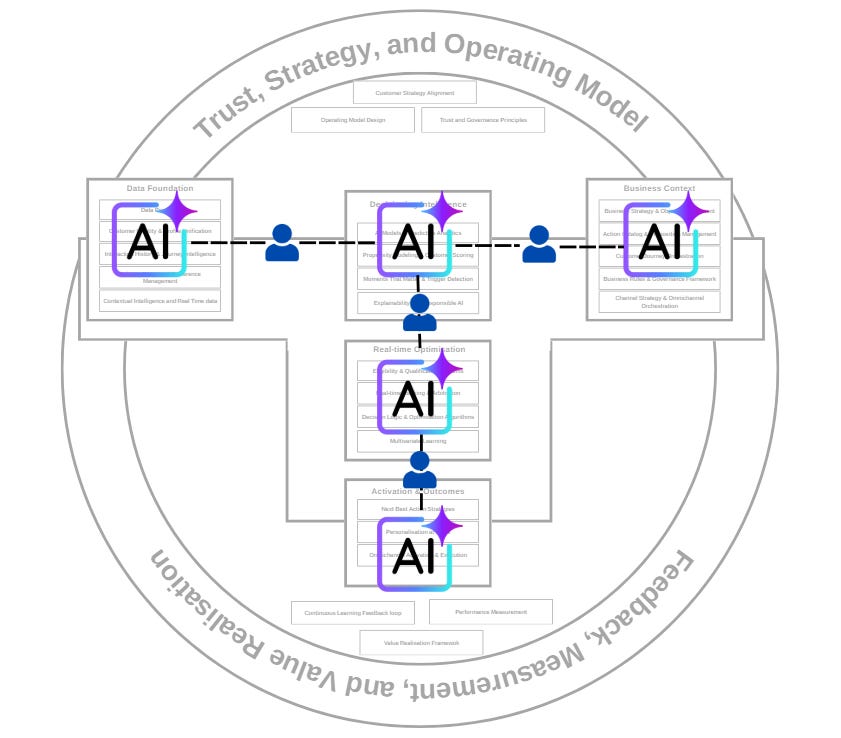

In the near term, each major component of the Customer Decisioning Blueprint will evolve to incorporate specialised AI agents that assist rather than replace humans.

This manifests in several ways:

Data Foundation agents responsible for identity resolution, consent interpretation, contextual assembly, and data quality enforcement

Decisioning Intelligence agents generating predictions, eligibility assessments, and treatment options

Real-Time Optimisation agents performing arbitration, simulation, and trade-off analysis

Activation and Outcomes agents adapting execution and capturing response signals

Business Context agents interpreting objectives, constraints, and prioritisation logic

In this configuration, AI agents operate within bounded domains. They assist by producing options, assessments, and recommendations. Importantly, responsibility for cross-domain coherence remains human.

Humans continue to:

reconcile competing objectives

interpret system-level behaviour

validate decisions against strategic intent

intervene when exceptions arise

The blueprint functions as a shared structure that allows humans to reason about agent behaviour across components rather than within isolated tools.
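The division of labour described above can be illustrated with a minimal sketch. It is not a reference implementation: the agent functions, the `Proposal` fields, the scores, and the `reviewer` rule are all hypothetical names and values chosen for illustration. Two bounded-domain agents each propose options, and a human reviewer makes the cross-domain call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Proposal:
    agent: str       # bounded-domain agent that produced the option
    treatment: str   # the action being recommended
    score: float     # the agent's own value estimate, 0 to 1
    rationale: str   # short explanation shown to the human reviewer


def decisioning_intelligence_agent(customer: Dict) -> List[Proposal]:
    """Hypothetical agent: turns a propensity signal into treatment options."""
    options = [Proposal("decisioning_intelligence", "service_tip", 0.4,
                        "default helpful content")]
    if customer["upgrade_propensity"] >= 0.6:
        options.append(Proposal("decisioning_intelligence", "upgrade_offer",
                                customer["upgrade_propensity"],
                                "high upgrade propensity"))
    return options


def business_context_agent(customer: Dict) -> List[Proposal]:
    """Hypothetical agent: expresses a business constraint as an option."""
    if customer["open_complaint"]:
        return [Proposal("business_context", "suppress_marketing", 0.7,
                         "open complaint takes priority")]
    return []


def collect_proposals(customer: Dict, agents: List[Callable]) -> List[Proposal]:
    """Gather options from every agent, highest score first."""
    proposals = [p for agent in agents for p in agent(customer)]
    return sorted(proposals, key=lambda p: p.score, reverse=True)


def human_review(proposals: List[Proposal],
                 approve: Callable[[Proposal], bool]) -> Optional[Proposal]:
    """Return the first proposal the human reviewer approves, if any."""
    for proposal in proposals:
        if approve(proposal):
            return proposal
    return None


customer = {"upgrade_propensity": 0.8, "open_complaint": True}
ranked = collect_proposals(
    customer, [decisioning_intelligence_agent, business_context_agent])


# The reviewer applies cross-domain judgement: no selling during a complaint.
def reviewer(proposal: Proposal) -> bool:
    return not (customer["open_complaint"]
                and proposal.treatment == "upgrade_offer")


chosen = human_review(ranked, reviewer)
print(chosen.treatment)
```

In this sketch the upgrade offer ranks highest on its own agent's score, yet the reviewer rejects it because of the open complaint. The agents optimise locally; coherence across domains is supplied by the human step.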

Early forms of this shift are already visible. AI-assisted content generation and predictive customer analytics increasingly translate data signals into operational decisions at scale, improving consistency and responsiveness while remaining under human governance.

Why this transitional phase is critical

Agent-assisted decisioning is often viewed as a temporary step toward full autonomy. In practice, it is where organisations develop governance capability. Without explicit frameworks, predictable risks emerge: local optimisation, constraint conflicts, and loss of decision traceability.

Most large-scale AI failures are not driven by incorrect predictions, but by weak oversight and misaligned incentives. Agent-augmented decisioning allows these risks to surface while humans remain meaningfully involved, validating whether the underlying decisioning architecture is robust before autonomy increases.

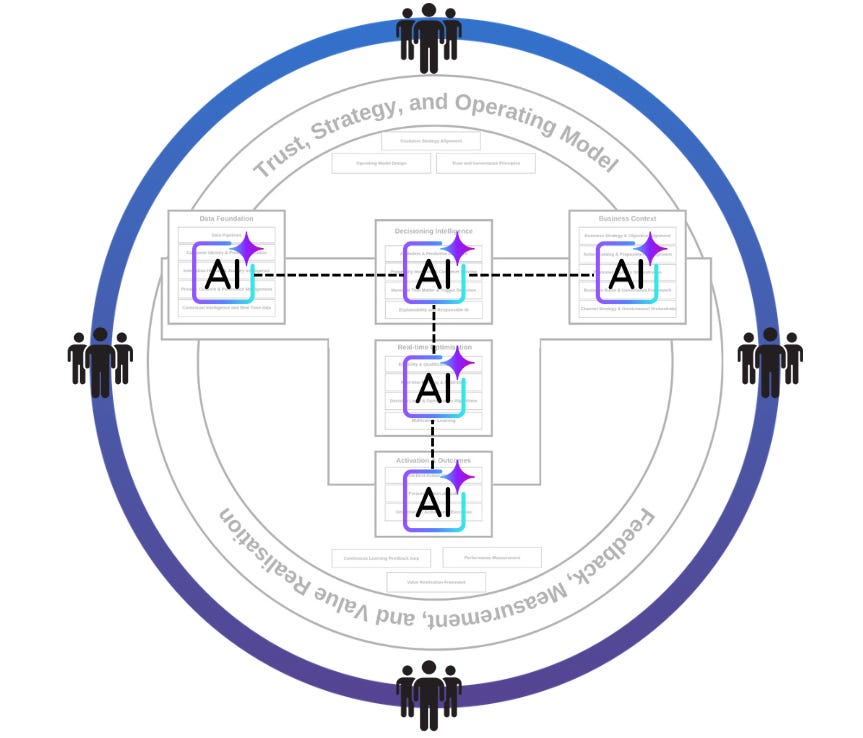

The not-so-distant future: autonomous decisioning systems

As AI agents increasingly coordinate across components, a second inflection point emerges: agents that converse with agents.

In this phase, the execution pattern shifts:

AI agents make decisions autonomously within defined boundaries

Humans step back from tactical choice to focus on framework stewardship

The operating model emphasises intent shaping rather than scenario steering

This shift is analogous to the evolution of autonomous optimisation in supply chains or financial systems, where algorithmic control of routine decisions allows humans to focus on strategy, governance, and edge-case escalation. Industry forecasts now frame agentic AI as capable of autonomous workflow orchestration, predictive optimisation, and dynamic response adaptation across domains.

I argue that this is the not-so-distant future of customer engagement and marketing.

A redefinition of human responsibility

In autonomous systems, human involvement shifts fundamentally. Humans are no longer responsible for individual decisions; they become stewards of the decisioning framework itself. This includes defining boundaries, setting ethical and brand constraints, establishing escalation logic, and auditing outcomes for alignment and trust.

Governance is not an administrative burden in this context; it is the core design principle that enables autonomy to scale safely. As decisions become less observable in real time, the blueprint’s emphasis on trust, strategy, and operating model becomes even more important.
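One way to picture framework stewardship is as a set of explicit guardrails that every autonomous decision passes through. The sketch below rests on stated assumptions: the `Guardrails` fields, the thresholds, the segment name, and the `decide` function are hypothetical and do not describe any particular platform. It shows boundaries, an ethical or brand constraint, escalation logic, and an audit trail in one small loop.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class Guardrails:
    max_discount_pct: float            # boundary on what an agent may offer
    excluded_segments: FrozenSet[str]  # ethical or brand constraint
    escalate_above_value: float        # threshold for routing to a human


@dataclass
class AuditLog:
    entries: List[Dict] = field(default_factory=list)

    def record(self, customer_id: str, outcome: str, reason: str) -> None:
        """Keep every decision traceable, whatever its outcome."""
        self.entries.append(
            {"customer_id": customer_id, "outcome": outcome, "reason": reason})


def decide(customer: Dict, action: Dict, rails: Guardrails,
           log: AuditLog) -> str:
    """Return 'executed', 'blocked', or 'escalated' and log the reason."""
    if customer["segment"] in rails.excluded_segments:
        outcome, reason = "blocked", "segment excluded by constraint"
    elif action["discount_pct"] > rails.max_discount_pct:
        outcome, reason = "blocked", "discount exceeds boundary"
    elif customer["lifetime_value"] > rails.escalate_above_value:
        outcome, reason = "escalated", "high-value customer sent to a human"
    else:
        outcome, reason = "executed", "within boundaries"
    log.record(customer["id"], outcome, reason)
    return outcome


# Illustrative values only; real thresholds are a stewardship decision.
rails = Guardrails(max_discount_pct=20.0,
                   excluded_segments=frozenset({"vulnerable"}),
                   escalate_above_value=10_000.0)
log = AuditLog()

results = [
    decide({"id": "c1", "segment": "standard", "lifetime_value": 500.0},
           {"discount_pct": 10.0}, rails, log),
    decide({"id": "c2", "segment": "vulnerable", "lifetime_value": 500.0},
           {"discount_pct": 10.0}, rails, log),
    decide({"id": "c3", "segment": "standard", "lifetime_value": 50_000.0},
           {"discount_pct": 10.0}, rails, log),
    decide({"id": "c4", "segment": "standard", "lifetime_value": 500.0},
           {"discount_pct": 35.0}, rails, log),
]
print(results)
```

The humans in this picture never choose an individual treatment. They own the `Guardrails` values and read the audit log, which is the shift from scenario steering to intent shaping.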

Why the blueprint is critical

The Customer Decisioning Blueprint does not prescribe technologies or vendors. It defines decisioning as a discipline - a set of structural choices that determine how decisions are framed, governed, executed, measured, and evolved.

Whether AI remains assistive or becomes agentic, the blueprint remains relevant because stewardship cannot be delegated to models, trade-offs require human judgement, and accountability and trust remain organisational responsibilities. AI can optimise within frameworks; it cannot define their legitimacy.

Autonomous future

As organisations navigate a future where decisions are increasingly autonomous, it is essential to distinguish between technological capability and architectural integrity.

In the near term, AI agents assist decisioning systems while humans maintain integrative oversight.

In the not-so-distant future, AI agents will coordinate decisions autonomously, and humans will steward the framework within which those decisions occur.

The Customer Decisioning Blueprint provides a stable conceptual foundation for both contexts. It ensures that, as autonomy increases, decisions remain predictable, accountable, aligned with organisational intent, and trustworthy from the customer’s perspective.

Without such a blueprint, decisioning risks devolving into disconnected optimisation. With it, organisations can realise coherent, scalable, and sustainable value from both technology and human judgement.

Completing the Blueprint Series

This essay brings the Customer Decisioning Blueprint series to a close. Across these instalments, the intent has been to articulate customer decisioning as a coherent, system-level capability rather than a collection of tools, models, or execution tactics. The blueprint has been presented not as a prescriptive solution, but as a conceptual foundation that practitioners can use to reason about decision quality, governance, and scale in increasingly complex environments.

I hope this series has provided a useful lens for leaders and practitioners seeking to move beyond fragmented personalisation and toward more deliberate, trustworthy, and sustainable decisioning practices. As customer engagement continues to evolve under the influence of AI and automation, the need for clear decisioning architectures and human stewardship will only become more pronounced. It is in that context that this blueprint is offered - as a starting point for more disciplined thinking and more coherent action.

Requests

I’d love your feedback - it only takes a minute! Tell me what you think and what you’d like to read next. This is the Survey Link.

If you are a Customer Decisioning leader or practitioner, whether on the brand side or the vendor side, I’d love to have a chat with you about an upcoming project. Please feel free to drop me an email at pawan[@]martechsquare.com
